In parts one and two I covered making the PPP connection, firewall and the DHCP server. This just leaves DNS.

Unbound

FreeBSD has stopped providing a proper DNS server (BIND – the Berkeley Internet Name Domain) in the base system, replacing it with “unbound”. This might be all you need if you just want to pass DNS queries through to elsewhere and have them cached. It will even allow you to configure local names for hosts on the LAN.

To kick off unbound once, run “service local_unbound onestart”. This will clobber your /etc/resolv.conf file but it keeps a backup – note well where it’s put it! Probably /var/backups/resolv.conf.20260103.113619 (where the suffix is the date and time the backup was made).

For some strange reason (possibly Linux related) the configuration files for unbound are stored in /var/unbound – notably unbound.conf. By default it will only answer queries from localhost, so you’ll need to do a bit of tweaking. Assume your LAN is 192.168.1.0/24 and this host (the gateway/router) is on 192.168.1.2 as per the earlier articles. Add the interface and access-control lines to the server section so it becomes:

server:

username: unbound

directory: /var/unbound

chroot: /var/unbound

pidfile: /var/run/local_unbound.pid

auto-trust-anchor-file: /var/unbound/root.key

interface: 192.168.1.2

interface: 127.0.0.1

access-control: 127.0.0.0/8 allow

access-control: 192.168.1.0/24 allow

# Paranoid blocking of queries from elsewhere

access-control: 0.0.0.0/0 refuse

access-control: ::0/0 refuse

There is a warning at the top of the file that it was auto-generated but it’s safe to edit manually in this case. The interface lines are, as you might expect, the explicit interfaces to listen on. The access-control lines are vital, as listening on an interface doesn’t mean it will respond to queries on that subnet. The paranoid blocking access-control lines are probably redundant unless you make a slip-up in configuring something somewhere else and a query slips in through the back door.

Once configured you can use 192.168.1.2 as your LAN’s DNS resolver by setting the DHCP server from part two to issue it. Add local_unbound_enable="YES" to your /etc/rc.conf file to have it load on boot.
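For reference, handing out the resolver over DHCP comes down to one option line. This is only a sketch: it assumes a dhcpd.conf-style configuration as used by the common dhcpd implementations, and the file location in the comment is a guess, so adjust it to wherever yours lives.

```conf
# dhcpd.conf fragment (location assumed, e.g. /usr/local/etc/dhcpd.conf)
# Tell DHCP clients to use the gateway as their DNS resolver.
option domain-name-servers 192.168.1.2;
```

Clients will pick up the new resolver the next time they renew their lease.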

BIND

Unbound is a lightweight local DNS resolver, but you might want full DNS. I know I do. Therefore you’ll need to install BIND (aka named).

We’re actually looking for BIND9, so search packages for the version you want. This will currently be bind918, bind920 or bind9-devel. Personally I’ll leave someone else to play with the latest version and go for the middle one (BIND 9.20).

pkg install bind920

You’ll then need to generate a key to control it using the rndc utility (more on that later)

rndc-confgen -a

Next we’ll need to edit some configuration files:

cd /usr/local/etc/namedb

Here you should find named.conf, along with an identical named.conf.sample to fall back on in case it’s missing or you break it. The changes are minor.

Around line 20 there’s the listen-on option. Set this to:

listen-on { 127.0.0.1; 192.168.1.2;};

Again, this assumes that 192.168.1.2 is this machine. That’s all you need to do if you want it to provide services to the LAN. While we’re in the options section, set the zone file format to text rather than the modern binary (raw) format. Binary is quicker for massive multi-zone DNS servers, but text is traditional and more convenient otherwise.

masterfile-format text;

If you’re going to do DNS properly you need to configure the local domain. At the end of the file add the following as appropriate. In this series we’re assuming your domain is example.com and this particular local site is called mysite – i.e. mysite.example.com. All hosts on this site will therefore be named as jim.mysite.example.com, printer.mysite.example.com and so on.

zone "mysite.example.com"

{

type primary;

file "/usr/local/etc/namedb/primary/mysite.example.com";

};

zone "1.168.192.in-addr.arpa"

{

type primary;

file "/usr/local/etc/namedb/primary/1.168.192.in-addr.arpa";

};

The first file is the zone file, mapping hostnames on to IP addresses. The second is the reverse lookup file. They will look something like this:

; mysite.example.com

;

$TTL 86400 ; 1 day

mysite.example.com. IN SOA ns0.mysite.example.com. hostmaster.example.com. (

2006011238 ; serial

18000 ; refresh (5 hours)

900 ; retry (15 minutes)

604800 ; expire (1 week)

36000 ; minimum (10 hours)

)

@ NS ns0.mysite.example.com.

adderview1 A 192.168.1.204

c5750 A 192.168.1.201

canoninkjet A 192.168.1.202

dlinkswitch A 192.168.1.5

gateway A 192.168.1.2

eap245 A 192.168.1.6

eap265 A 192.168.1.8

fred-pc A 192.168.1.101

ns0 CNAME gateway

This is the zone file. I’m not going to explain everything about it here; it’s a working example, and I’ll just cover the main points.

The first lines, starting with a ‘;’ are comments.

Next comes $TTL, which sets the default time-to-live for everything that doesn’t specify differently, and is basically the number of seconds that systems are supposed to cache the result of a lookup. You might want to reduce this to something like 30 seconds if you’re experimenting. You must specify the default TTL first thing in the file.

Then comes the SOA (Start of Authority) for the domain. It specifies the main name server (ns0.mysite.example.com) and the email address of the DNS administrator. However, as ‘@’ has a special meaning in zone files it’s replaced by a dot – so hostmaster.example.com. really reads hostmaster@example.com. I’ve never figured out how you can have an email address with a dot in the name.

The other values are commented – just use the defaults I’ve given or look them up and tweak them. The only important one is the first number – the serial. This is used to identify which is the newest version of the zone file when it comes to replication, and the important rule is that when you update the master zone file you need to increment it. There’s a convention that you number them YYYYMMDDxx where xx allows for 100 revisions within the day. But it’s only a convention. If you only have one name server, as here, then it’s not important as it’s not replicating.
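If you’d rather not work out the next serial by hand, the YYYYMMDDxx convention is easy to script. This is a minimal sketch, not part of BIND: next_serial is a made-up helper name, and it assumes you pass in the serial currently in the zone file (the optional second argument overrides today’s date, which is handy for testing).

```shell
#!/bin/sh
# next_serial CURRENT [TODAY]
# Hypothetical helper: print the next serial in YYYYMMDDxx form.
# TODAY is YYYYMMDD and defaults to the system date.
next_serial() {
    current=$1
    today=${2:-$(date +%Y%m%d)}
    base="${today}00"
    if [ "$current" -ge "$base" ]; then
        # Already edited today (or the serial is ahead of the date): add one.
        echo $((current + 1))
    else
        # First edit of the day: start again at revision 00.
        echo "$base"
    fi
}

next_serial 2006011238 20060112   # prints 2006011239
next_serial 2006011238 20260103   # prints 2026010300
```

The serial only ever goes up, which is the rule that actually matters. If you somehow manage more than 100 edits in a day it simply runs on past the date, and the convention catches up again later.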

Next we define the name servers for the domain with NS records. We’ve only got one, so we only have one NS record. The @ is a macro for the current “origin” – i.e. mysite.example.com.

Note well the . on the end of names. This means start at the root – it’s important. Some web browsers allow you to omit it in URLs, and guess you always mean to start at the root – but DNS doesn’t!
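To see why the trailing dot matters, here is a small sketch of how a name in a zone file gets expanded against the origin. The qualify function is purely illustrative (it isn’t a BIND tool) and only mimics the three basic cases: @, names ending in a dot, and everything else.

```shell
#!/bin/sh
# qualify NAME ORIGIN
# Illustrative only: expand a zone-file name the way the origin rule works.
qualify() {
    name=$1
    origin=$2
    case $name in
        @)  echo "$origin" ;;           # @ stands for the origin itself
        *.) echo "$name" ;;             # trailing dot: already absolute
        *)  echo "$name.$origin" ;;     # anything else is relative to the origin
    esac
}

qualify gateway mysite.example.com.
# prints gateway.mysite.example.com.
qualify ns0.mysite.example.com. mysite.example.com.
# prints ns0.mysite.example.com.
qualify ns0.mysite.example.com mysite.example.com.
# prints ns0.mysite.example.com.mysite.example.com.
```

The last case is the classic mistake: forget the dot and the origin gets tacked on the end a second time.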

Then come the A or Address records. They’re pretty self-explanatory. Because the “origin” is set as mysite.example.com the first line effectively reads:

adderview1.mysite.example.com A 192.168.1.204

This means that if someone looks up adderview1.mysite.example.com they get the IP address 192.168.1.204. Simple! You can have an AAAA record that gives the IPv6 address, but I won’t cover that here.

The last line is like an A record but is a CNAME, which defines an alias. ns0 is aliased to gateway, which ultimately ends up as 192.168.1.2 – i.e. the address of our router/DNS server. There is nothing stopping you from having multiple A records pointing to the same IP address – and in some ways it’s better to use an absolute address. It comes down to how you want to manage things, and my desire to get a CNAME example in here somewhere. One caveat: the DNS standards (RFC 2181) say the name in an NS record shouldn’t be an alias, and BIND’s checking tools may complain about it, so if you hit trouble give ns0 its own A record instead.

The corresponding reverse lookup file goes like this:

; 1.168.192.in-addr.arpa

;

$TTL 86400 ; 1 day

@ IN SOA ns0.mysite.example.com. hostmaster.example.com. (

2006011231 ; serial

18000 ; refresh (5 hours)

900 ; retry (15 minutes)

604800 ; expire (1 week)

36000 ; minimum (10 hours)

)

@ IN NS ns0.mysite.example.com.

2 PTR gateway.mysite.example.com.

6 PTR eap245.mysite.example.com.

8 PTR eap265.mysite.example.com.

101 PTR fred-pc.mysite.example.com.

201 PTR c5750.mysite.example.com.

202 PTR canoninkjet.mysite.example.com.

204 PTR adderview1.mysite.example.com.

As you can see, it’s pretty much the same until you get to the PTR records. These are like A records but go in reverse. In case you’re wondering about the zone name, it’s important: it’s the first three bytes of the subnet, but backwards. The last byte is the first part of the PTR line, and the last part is the FQDN to be returned if you do a reverse lookup on the IPv4 address.

Therefore, if you reverse lookup 192.168.1.101 it will look in 1.168.192.in-addr.arpa for a PTR record with 101 as the key and return fred-pc.mysite.example.com.
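The backwards naming is easier to see worked through. This sketch splits an IPv4 address into the PTR key and the zone it lives in, assuming a /24 subnet as used throughout this series; reverse_ptr is a name I’ve made up for the example.

```shell
#!/bin/sh
# reverse_ptr IPV4
# Illustrative only: print the PTR key and the /24 reverse zone for an address.
# The body runs in a subshell ( ... ) so the IFS change does not leak out.
reverse_ptr() (
    IFS=.
    set -- $1      # split the address into its four octets
    echo "$4 $3.$2.$1.in-addr.arpa"
)

reverse_ptr 192.168.1.101   # prints 101 1.168.192.in-addr.arpa
```

In practice you don’t need to do this yourself: give the host command an IP address instead of a name and it builds the reversed name and asks for the PTR record.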

This all goes back to the history of the Internet, or more precisely, its precursor, called ARPANET. The .arpa TLD was supposed to be temporary during the transition, but it stuck around. Just do it the way I’ve said or fall flat on your face.

You can have a reverse lookup for IPv6 using an ip6.arpa file, but I’m not going to cover that this time.

Once you’ve made all these changes and set up your zone files, just kick it off with “service named onestart”. To make it start on boot add named_enable="YES" to /etc/rc.conf, after which the plain “service named start” works too.

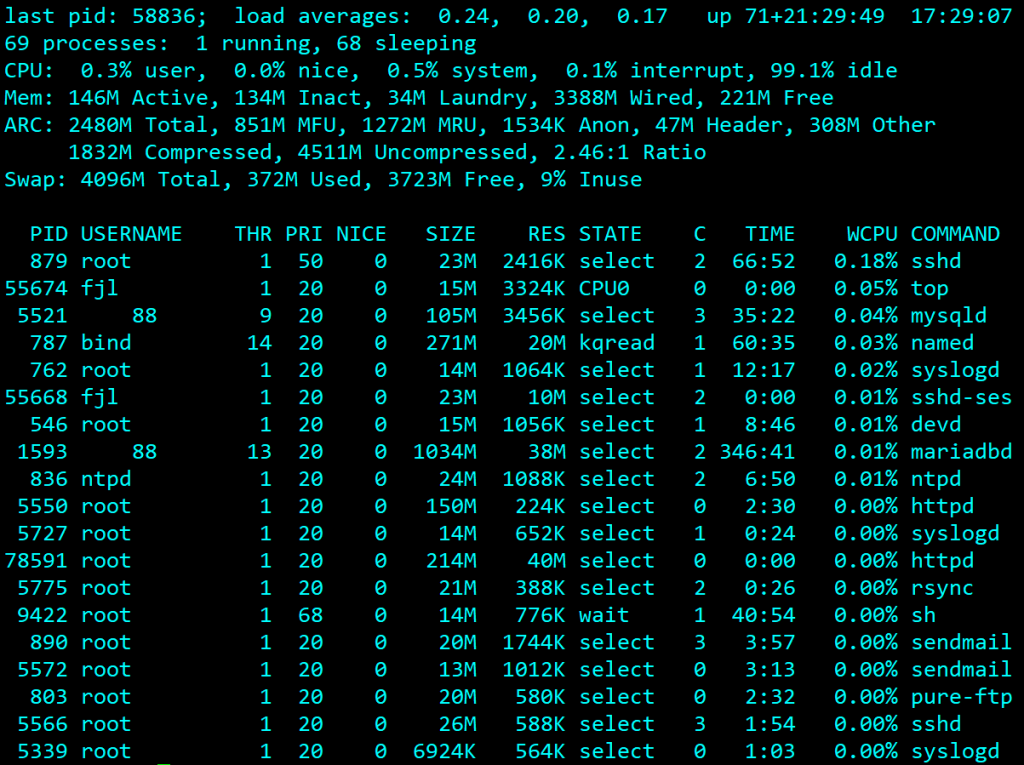

Debugging

You can test it’s working with “host gateway.mysite.example.com 127.0.0.1” and “host gateway.mysite.example.com 192.168.1.2” – both should return 192.168.1.2.

Error messages can be found in /var/log/messages – however they’re not always that revealing! Fortunately BIND comes with some useful checking tools, such as named-checkzone.

named-checkzone mysite.example.com /usr/local/etc/namedb/primary/mysite.example.com

This sanity checks that the zone file (second argument) is a proper zone file for the domain name given in the first argument. We’ve named the file after the domain, which can be confusing but has many advantages in other situations.

You can also check the reverse lookup file in the same way:

named-checkzone 1.168.192.in-addr.arpa /usr/local/etc/namedb/primary/1.168.192.in-addr.arpa

It’ll either come up with warnings or errors, or say it would have been loaded with an OK message.

Next Stage

In Part 2 I explained how to set up the OpenBSD DHCP daemon and here I’ve explained unbound as well as BIND. But for redundancy, the full ISC DHCP Daemon and BIND are necessary as they are able to replicate so one server can carry on if the other fails. That’s the next installment.