I was asked to explain basic Unix shell file manipulation commands, so here goes.

If you’re familiar with MS-DOS, or Windows CMD.EXE and PowerShell (or even CP/M) you’ll know how to manipulate files and directories on the command line. It’s tempting to think that the Unix command line is the same, but there are a few differences that aren’t immediately apparent.

There are actually two main command lines (or shells) for Unix: sh and csh. Others are mainly compatible with these two, with the most common clones being bash and tcsh respectively. Fortunately they’re all the same when it comes to basic commands.

Directory Concepts

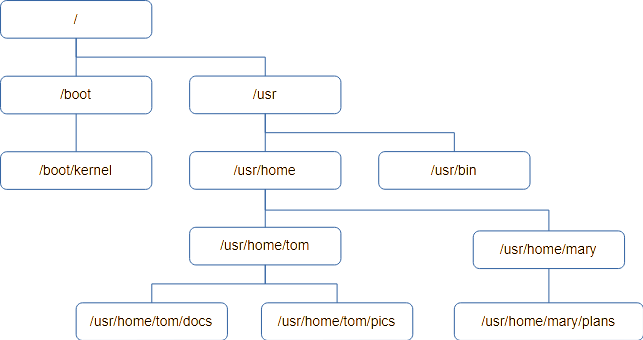

Files are organised into groups called “directories”, which are often called “Folders” on Macintosh and Windows. It’s not a great analogy, but it’s visual on a GUI. Unlike the real world, a directory may contain additional directories as well as files. These directories (or sub-directories) can also contain files and more directories and so on. If you drew a diagram you’d end up with something looking like a tree, with directories being branches coming off branches and the files themselves being leaves.

All good trees start with a root from which the rest branches off, and this is no different. The start of a Unix directory tree is known as the root.

Unix has a concept called the Current Working Directory. When a program is looking for a file it is assumed it will be found in the Working Directory if no other location is specified.

Users on a Unix system have an assigned Home Directory, and their Working Directory is initially set to this when they log on.

Users may create whatever files and sub-directories they need within their Home Directory, and the system will allow them to do whatever they want with anything they create as it’s owned by them. It’s possible (in fact necessary) for a normal user to see other directories on the system, but generally they won’t be able to modify files outside their home directory.

Here’s an example of a directory tree. It starts with the root, /, and each level down adds to the directory “path” to get to the directory.

If you want to know what your current working directory is, the first command we’ll need is “pwd” – or “Print Working Directory”. If you’re ever unsure, use pwd to find out where you are.

Unix commands tend to be short to reduce the amount of typing needed. Many are two letters, and few are longer than four.

The thing you’re most likely to want to do is see a list of files in the current directory. This is achieved using the ls command, which is a shortened form of LiSt.

Typing ls will list the names of all the files and directories, sorted into ASCII order and arranged into as many columns as will fit on the terminal. You may be surprised to see files that begin with “X” are ahead of files beginning with “a”, but upper case “X” has a lower ASCII value than lower case “a”. Digits 0..9 have a lower value still.
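If you’d like to see this ordering for yourself, here’s a small sketch. The file names are made up, it works in a scratch directory created with mktemp (an assumption, though most modern systems have it), and it sets LC_ALL=C because many current systems otherwise sort names by the rules of your language rather than by plain ASCII.

```shell
# Work in a scratch directory so nothing of yours is touched.
start=$(pwd)
demo=$(mktemp -d)
cd "$demo"

# touch creates empty files; the names are invented for this demonstration.
touch apple Xray 0zero

# LC_ALL=C asks ls for plain ASCII ordering.
listing=$(LC_ALL=C ls)
echo "$listing"
# Prints 0zero, then Xray, then apple, one name per line.

# Tidy up using only rm and rmdir.
rm apple Xray 0zero
cd "$start"
rmdir "$demo"
```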

ls has lots of flags to control its behaviour, and you should read the documentation if you want to know more of them.

If you want more detail about the files, pass ls the ‘-l’ flag (that’s lower-case L, and means “long form”). You’ll get output like this instead:

drw-r----- 2 fjl devs 2 Aug 28 13:17 Release

drw-r----- 2 fjl devs 29 Dec 26 2019 Debug

-rw-r----- 1 fjl devs 2176 Feb 17 2012 editor.h

-rw-r----- 1 fjl devs 28190 Feb 7 2012 fbas.c

-rw-r----- 1 fjl devs 10197 Feb 17 2012 fbas.h

-rw-r----- 1 fjl devs 5590 Feb 17 2012 fbasexpr.c

-rw-r----- 1 fjl devs 7556 Feb 3 2012 fbasheap.c

-rw-r----- 1 fjl devs 7044 Feb 4 2012 fbasio.c

-rw-r----- 1 fjl devs 4589 Feb 3 2012 fbasline.c

-rw-r----- 1 fjl devs 4069 Feb 3 2012 fbasstr.c

-rw-r----- 1 fjl devs 4125 Feb 3 2012 fbassym.c

-rw-r----- 1 fjl devs 13934 Feb 3 2012 fbastok.c

drw-r----- 3 fjl devs 3 Dec 26 2019 ipch

-rw-r----- 1 fjl devs 3012 Feb 17 2012 token.h

I’m going to skip the first column for now and look at the third and fourth.

fjl devs

This shows the name of the user who owns the file, followed by the group that owns the file. Unix users can be members of groups, and sometimes it’s useful for a file to be effectively used by a group rather than one user. For example, if you have an “accounts” group and all your accounts staff belong to it, a file can be part of the “accounts” group so everyone can work on it.

Now we’ve covered users and groups we can return to the first column. It shows the file flags, which are various attributes concerning the file. If there’s a ‘-’ then the flag isn’t set. The last nine flags are three sets of three permissions for the file.

The first set are the file owner’s permissions (or rights to use the file).

The second set are the file group’s permissions.

The third set are the permissions for any user who isn’t either the owner or in the file’s group.

Each group of three represents, in order:

r – can the file be read.

w – can the file be written to. If not set it means you can only read it.

x – can the file be run (i.e. is it a program)

So:

-rw------- means only the owner can read/write the file.

-rw-r----- means the file can be read by anyone in its group but only written to by the owner.

-rwxr-x--- means the file is a program, probably written by its owner. Others in the group can run it, but no one else can even read it.
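To see these three patterns on real files, here’s a rough sketch. It leans on chmod, a command this article doesn’t otherwise cover, which sets a file’s permissions from a numeric shorthand. The file names are invented, and everything happens in a scratch directory made with mktemp.

```shell
start=$(pwd)
demo=$(mktemp -d)
cd "$demo"
touch private.txt shared.txt tool   # hypothetical file names

# chmod is not covered in this article; the numbers are shorthand
# for the permission patterns shown in the comments.
chmod 600 private.txt   # -rw-------
chmod 640 shared.txt    # -rw-r-----
chmod 750 tool          # -rwxr-x---

# cut keeps the first ten characters of each line:
# the file type followed by the nine permission flags.
flags=$(ls -l private.txt shared.txt tool | cut -c1-10)
echo "$flags"

rm private.txt shared.txt tool
cd "$start"
rmdir "$demo"
```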

There are other special characters that might appear in the first field for advanced purposes but I’m covering the basics here, and you could write a book on ls.

I’ve missed off the first ‘-’, which isn’t a permission but indicates the type of the file. If it’s a ‘-’ it’s just a regular file. A ‘d’ means it’s actually a directory. You’ll sometimes see ‘c’ and ‘s’ on modern systems, which are normally disk drives and network connections. Unix treats everything like a file so disk drives and network sockets can be found in the directory tree too. You’ll probably see ‘l’ (lower case L) which means it’s a symbolic link – a bit like a .LNK file in Windows.

This brings us to the second column, which is a number. It is the number of times the file exists in the directory tree thanks to there being links, and in most cases this will be one. I’ll deal with links later.

The last three columns should be easy to guess: Length, date and finally the name of the file; at least in the case of a regular file.

There are many useful and not so useful options supported by ls. Here are a few that might be handy.

-d

By default, if you give ls a directory name it will show you the contents of the directory. If you want to see the directory itself, most likely because you want to see its permissions, specify -d.

-t

Sort output by modification time instead of by name.

-r

Reverse the order of sort. -rtl is useful as it will sort your files with the newest at the end of the list.

-h

Instead of printing a file size in bytes, which could be a very long number, display it in “human readable” format, which restricts it to three characters followed by a suffix: B=bytes, K=kilobytes, M=megabytes and so on.

-F

This is very handy if you’re not using -l, as with just the name printed you can’t tell regular and special files apart. This causes a single character to be added to the file name: ‘*’ means it’s a program (has the x flag set), ‘/’ means it’s a directory and ‘@’ means it’s a symbolic link. Less often you’ll see ‘=’ for a socket, ‘|’ for a FIFO (obsolete) and ‘%’ for a whiteout file (insanity involving union mounts).
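Here’s a small sketch of a few of these flags in action. The file names and dates are invented, chmod (not covered in this article) is used only to set the x flag, and touch -t is used to fake the modification times so the sort order is predictable.

```shell
start=$(pwd)
demo=$(mktemp -d)
cd "$demo"

mkdir sub
touch -t 201202031200 notes.txt   # pretend this dates from 3 Feb 2012
touch -t 201912261200 run.sh      # and this one from 26 Dec 2019
chmod +x run.sh                   # set the x flag so -F marks it with *

marked=$(LC_ALL=C ls -F)          # names with a type marker appended
echo "$marked"

only_dir=$(ls -d sub)             # the directory itself, not its contents
echo "$only_dir"

oldest_first=$(ls -rt notes.txt run.sh)   # newest file ends up last
echo "$oldest_first"

rm notes.txt run.sh
rmdir sub
cd "$start"
rmdir "$demo"
```

Adding l to the last command (ls -rtl) gives the same order with the long-form details alongside.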

Finally, ls takes arguments. By default it lists everything in the working directory, but if you give it a list of files and directories it will list just those.

Where src is a directory, ls src will list the files in that directory. Remember to use ls -d if you just want information on the directory itself.

ls src

List everything in the src and obj directories:

ls src obj

Now you can find the names of the files, how do you look at what’s in them? To display the contents of a text file the simple method is cat.

cat test.c

This prints the contents of test.c. You might just want to see the first few lines, so instead try:

head test.c

Only the first ten lines (by default) are printed.

If you want to see the last ten lines, try:

tail test.c

If you want to go down the file a screen full at a time, use:

more test.c

It stops and waits for you to press the space bar after every screen. If you’ve read enough, hit ‘q’ to quit.

less test.c

This is the latest greatest file viewer and it allows you to scroll up and down a file using the arrow keys. It’s got a lot of options.
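The non-interactive viewers are easy to try on a file whose contents you know. This sketch builds a twelve-line file with printf and the “>” redirection (both are my additions for the demonstration, and the file name is made up), then looks at it with head and tail.

```shell
start=$(pwd)
demo=$(mktemp -d)
cd "$demo"

# printf repeats its format for each argument, giving "line 1" to "line 12".
# The > sends that output into lines.txt instead of the screen.
printf 'line %s\n' 1 2 3 4 5 6 7 8 9 10 11 12 > lines.txt

top=$(head lines.txt)      # the first ten lines: line 1 to line 10
bottom=$(tail lines.txt)   # the last ten lines: line 3 to line 12
echo "$top"
echo "$bottom"

rm lines.txt
cd "$start"
rmdir "$demo"
```

more and less are left out here because they wait for key presses, so they only make sense typed at a terminal.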

So far we’ve stayed in our home directory, where we have kept all our files. But eventually you’re going to need to organise your files in a hierarchical structure in directories.

To make a directory called “new” type:

mkdir new

This is a mnemonic for “make directory”.

To change your working directory use the chdir command (Change Directory):

chdir new

Most people use the abbreviated synonym for chdir, “cd” (and some shells, bash among them, only have cd), so this is equivalent:

cd new

Once you’re there, type “pwd” to prove we’ve moved:

pwd

If you type ls now you won’t see any files, because it’s empty.

You can also specify the directory explicitly, such as:

cd /usr/home/fred/new

If you don’t start with the root ‘/’, cd will usually start looking for the name of the new directory in the current working directory.

To move back one level up the directory level use this command:

cd ..

You’ll be back in your home directory.

To get rid of the “new” directory use the rmdir command (ReMove DIRectory):

rmdir new

This only works on empty directories, so if there were any files in it you’d have to delete them first. There are other more dangerous commands that will destroy directories and all their contents but it’s better to stick with the safer ones!
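Here’s the whole round trip as one sketch, including rmdir refusing to remove a directory that still has something in it. A scratch directory from mktemp stands in for your home directory, “leftover” is a made-up file name, and the “||” (which runs the echo only if rmdir fails) is my addition.

```shell
start=$(pwd)
demo=$(mktemp -d)    # stands in for your home directory
cd "$demo"

mkdir new
cd new
where=$(pwd)
echo "$where"        # a path ending in /new

cd ..                # back up one level
touch new/leftover   # put an empty file inside new

# rmdir complains and leaves the directory alone because it isn't empty.
rmdir new || echo "rmdir refused: new is not empty"

rm new/leftover      # delete the contents first...
rmdir new            # ...and now it works

cd "$start"
rmdir "$demo"
```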

To remove an individual file use the rm (ReMove) command, in this case the file being named “unwanted”:

rm unwanted

Normally files are created by applications, but if you want a file to experiment on, the easiest way to create one is “touch filename”, which creates an empty file called “filename”. You can also use the echo command:

echo "This is my new text file, do you like it?" > myfile.txt

Echo prints stuff to the screen, but “> myfile.txt” tells Unix to put the output of the echo command into “myfile.txt” instead of displaying it on the screen. We’ll use “echo” more later.
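A minimal sketch of both ways of making a file, run in a scratch directory (mktemp is an assumption, and “empty.txt” is a made-up name):

```shell
start=$(pwd)
demo=$(mktemp -d)
cd "$demo"

touch empty.txt
echo "This is my new text file, do you like it?" > myfile.txt

# The length column shows 0 for empty.txt.
ls -l empty.txt myfile.txt

contents=$(cat myfile.txt)
echo "$contents"

rm empty.txt myfile.txt
cd "$start"
rmdir "$demo"
```

Be aware that “>” normally replaces whatever was already in the file, so take care which name you give it.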

You can display the contents with:

cat myfile.txt

One thing you’re going to want to do pretty soon is copy a file, which is achieved using the cp (CoPy) command:

cp myfile.txt copy-of-myfile.txt

This makes a copy of the file and calls it copy-of-myfile.txt.

You can also copy it into a directory:

mkdir new

cp myfile.txt new

To see it there, type:

ls -l new

To see the original and the copy, try:

ls -l myfile.txt new

If you wanted to delete the copy in “new” use the command:

rm new/myfile.txt

Perhaps, instead of copying your file into “new” you wanted to move it there, so you ended up with only one copy. This is one use of the mv (MoVe) command:

mv myfile.txt new

The file will disappear from your working directory and end up in “new”.

How do you rename a file? There’s no rename command, but mv does it for you. When all is said and done, all mv is doing is changing the name and location of a file.

cd new

mv myfile.txt myfile.text

That’s better – much less Microsoft, much more Unix.
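The copy, move and rename steps can be strung together as a single sketch. It runs in a scratch directory from mktemp (an assumption) and uses the same file names as the text; the only liberty taken is renaming the file by its path instead of changing into “new” first.

```shell
start=$(pwd)
demo=$(mktemp -d)
cd "$demo"
echo "some text" > myfile.txt

cp myfile.txt copy-of-myfile.txt    # two files with the same contents
mkdir new
cp myfile.txt new                   # a third copy, inside new
rm new/myfile.txt                   # delete that copy again
mv myfile.txt new                   # move the original into new
mv new/myfile.txt new/myfile.text   # rename it where it sits

here=$(ls)
inside=$(ls new)
echo "$here"     # copy-of-myfile.txt and new
echo "$inside"   # myfile.text

rm copy-of-myfile.txt new/myfile.text
rmdir new
cd "$start"
rmdir "$demo"
```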

Wildcards

So far we’ve used commands on single files and directories, but most of these commands work with multiple files in one go. We’ve given them a single parameter but we could have used a list.

For example, if we wanted to remove three files called “test”, “junk” and “foo” we could use the command:

rm test junk foo

If you’re dealing with a lot of files you can have the shell create a list of names instead of typing them all. You do this by specifying a sort of “template”, and all the files matching the template will be added to the list.

This might seem the same as Windows, but it’s not – be careful. With Windows the command does the pattern matching according to its context, but the Unix shell has no context and you may end up matching more than you intended, which is unfortunate if you’re about to delete stuff.

The matching against the template is called globbing, and uses the special characters ‘*’ and ‘?’ in its simplest form.

‘?’ matches any single character, whereas ‘*’ matches zero or more characters. All other characters except ‘[’ match themselves. For example:

“?at” would match cat, bat and rat. It would not match “at” as it must have a first character. Neither will it match “cats” as it’s expecting exactly three characters.

“cat*” would match cat, cats, caterpillar and so on.

“*cat*” would match all of the above, as well as “scatter”, “application” and “hellcat”.

You can also specify a list of allowable letters to match between square brackets [ and ], which means any single character will do. You can specify a range, so [0-9] will match any digit. Putting a ‘!’ in front negates the match, so [!0-9] will match any single character that is NOT a digit. If you want to match a two-digit number use [0-9][0-9].

To test globbing out safely, I recommend using the echo command. It works like this:

echo Hello world

This prints out Hello world. Useful, eh? But technically what it’s doing is taking all the arguments (aka parameters) one by one and printing them. The first argument is “Hello” so it prints that. The second is “world” so it prints a space and prints that, until there are no arguments left.

Suppose we type this:

echo a*

The Unix shell globs it using the * special character, which produces a list of all files that start with the letter ‘a’.

You can use this, for example, to specify all the ‘C’ files ending in .c:

echo *.c

If you want to include .h files in this, use:

echo *.c *.h

Practice with echo to see how globbing works as it’s non-destructive!

You can also use ls, although this goes on to expand directories into their contents, which can be confusing.
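Here’s a sketch that tries out the patterns described earlier using echo. It creates a handful of empty files in a scratch directory (mktemp is an assumption, and the two numeric file names are invented) and captures what each pattern expands to.

```shell
start=$(pwd)
demo=$(mktemp -d)
cd "$demo"
touch at bat cat rat cats caterpillar scatter hellcat 7 42

three=$(echo ?at)               # bat cat rat
starts=$(echo cat*)             # cat caterpillar cats
contains=$(echo *cat*)          # cat caterpillar cats hellcat scatter
two_digits=$(echo [0-9][0-9])   # 42
not_digit=$(echo [!0-9]at)      # bat cat rat

echo "$three"
echo "$starts"
echo "$contains"
echo "$two_digits"
echo "$not_digit"

rm at bat cat rat cats caterpillar scatter hellcat 7 42
cd "$start"
rmdir "$demo"
```

Notice that “at” never shows up for ?at, and “7” is ignored by [0-9][0-9] because it has only one digit.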

When you have a command that has a source and destination, such as cp (CoPy), it will interpret everything in the list as a file to be processed apart from the last item, which it will expect to be a directory. For example:

cp test junk foo rubbish

This will copy “test”, “junk” and “foo” into an existing directory called “rubbish”.
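As a quick sketch of that in a scratch directory (mktemp is an assumption), note that the destination has to be created first:

```shell
start=$(pwd)
demo=$(mktemp -d)
cd "$demo"
touch test junk foo
mkdir rubbish              # the destination directory must already exist

cp test junk foo rubbish   # everything before the last name is copied into it
copied=$(ls rubbish)
echo "$copied"             # foo, junk and test, one per line

rm test junk foo rubbish/test rubbish/junk rubbish/foo
rmdir rubbish
cd "$start"
rmdir "$demo"
```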

Now for a practical example. Suppose you have a ‘C’ project where everything is in one directory. .c files, .h files, .o files as well as the program itself. You want to sort this out so the source is in one directory and the objects in another.

Create two directories like this:

mkdir src obj

mv *.c *.h src

mv *.o obj

All done!
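If you’d rather rehearse this before trying it on a real project, here’s a sketch using a pretend project in a scratch directory. The file names are hypothetical and mktemp is an assumption; the echo line is the dry run recommended earlier.

```shell
start=$(pwd)
demo=$(mktemp -d)
cd "$demo"
touch main.c util.c util.h main.o util.o prog   # a pretend project

echo *.c *.h      # dry run: see what the first mv will pick up
mkdir src obj
mv *.c *.h src
mv *.o obj

sources=$(ls src)
objects=$(ls obj)
left=$(ls)
echo "$sources"   # main.c util.c util.h
echo "$objects"   # main.o util.o
echo "$left"      # obj prog src

rm src/main.c src/util.c src/util.h obj/main.o obj/util.o prog
rmdir src obj
cd "$start"
rmdir "$demo"
```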